4/22/2019

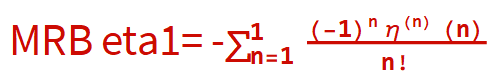

Let

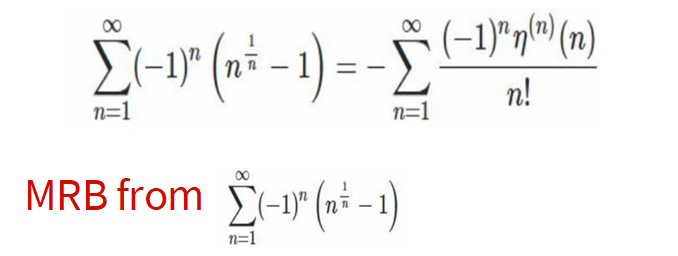

$$M=\sum _{n=1}^{\infty } \frac{(-1)^{n+1} \eta ^n(n)}{n!}=\sum _{n=1}^{\infty } (-1)^n \left(n^{1/n}-1\right).$$
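As a quick check of the second equality (my own sketch, not from the original post), write $\eta ^m$ for the $m$-th derivative of the Dirichlet eta function, as above. Expanding $n^{1/n}=e^{\log (n)/n}$ in its Taylor series and differentiating the eta series term by term gives

$$n^{1/n}-1=\sum _{m=1}^{\infty } \frac{\log ^m(n)}{m!\, n^m},\qquad \eta ^m(s)=\sum _{n=1}^{\infty } \frac{(-1)^{n+1} (-\log (n))^m}{n^s}.$$

So, provided the order of summation may be swapped (which is exactly the step that needs justifying), we get

$$\sum _{n=1}^{\infty } (-1)^n \left(n^{1/n}-1\right)=\sum _{m=1}^{\infty } \frac{1}{m!}\sum _{n=1}^{\infty } \frac{(-1)^n \log ^m(n)}{n^m}=\sum _{m=1}^{\infty } \frac{(-1)^{m+1} \eta ^m(m)}{m!}.$$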

Then, using what I learned about the absolute convergence of $\sum _{n=1}^{\infty } \frac{(-1)^{n+1} \eta ^n(n)}{n!}$ from https://math.stackexchange.com/questions/1673886/is-there-a-more-rigorous-way-to-show-these-two-sums-are-exactly-equal,

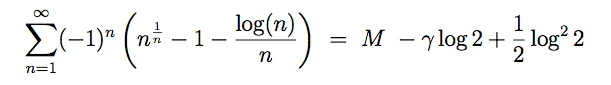

combined with an identity from Richard Crandall:


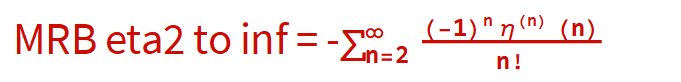

Also using what Mathematica says:

$$\sum _{n=1}^1 \frac{(-1)^{n+1} \underset{m\to 1}{\text{lim}} \eta ^n(m)}{n!}=\gamma \log (2)-\frac{\log ^2(2)}{2},$$
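Here the sum has only its $n=1$ term, $\eta ^1(1)=\eta '(1)$. Because $\eta$ is entire, the limit is just the value at $m=1$. For reference, this is a standard identity, added here as a sketch. Differentiating the eta series term by term gives the series this constant represents, which is the piece subtracted from $n^{1/n}-1$ in the next formula:

$$\eta '(1)=\sum _{n=1}^{\infty } \frac{(-1)^n \log (n)}{n}=\gamma \log (2)-\frac{\log ^2(2)}{2}\approx 0.1598689.$$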

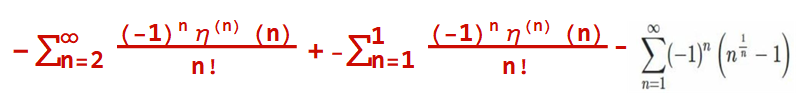

I figured out that

$$\sum _{n=2}^{\infty } \frac{(-1)^{n+1} \eta ^n(n)}{n!}=\sum _{n=1}^{\infty } (-1)^n \left(n^{1/n}-\frac{\log (n)}{n}-1\right).$$
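Adding the $n=1$ term from the previous step to both sides splits $M$ into a closed form plus a remainder series. This appears to be the decomposition that the second listing below evaluates (its `x - lg` term is $n^{1/n}-\log (n)/n$). It is also what the final check `MRBeta2toinf + MRBeta1 - MRB` tests numerically:

$$M=\gamma \log (2)-\frac{\log ^2(2)}{2}+\sum _{n=1}^{\infty } (-1)^n \left(n^{1/n}-\frac{\log (n)}{n}-1\right).$$

Since $n^{1/n}-1-\log (n)/n=\log ^2(n)/(2 n^2)+O\left(\log ^3(n)/n^3\right)$, the new summands decay like $\log ^2(n)/(2 n^2)$ rather than $\log (n)/n$.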

So I made the following major breakthrough in computing MRB from Crandall's first eta formula.

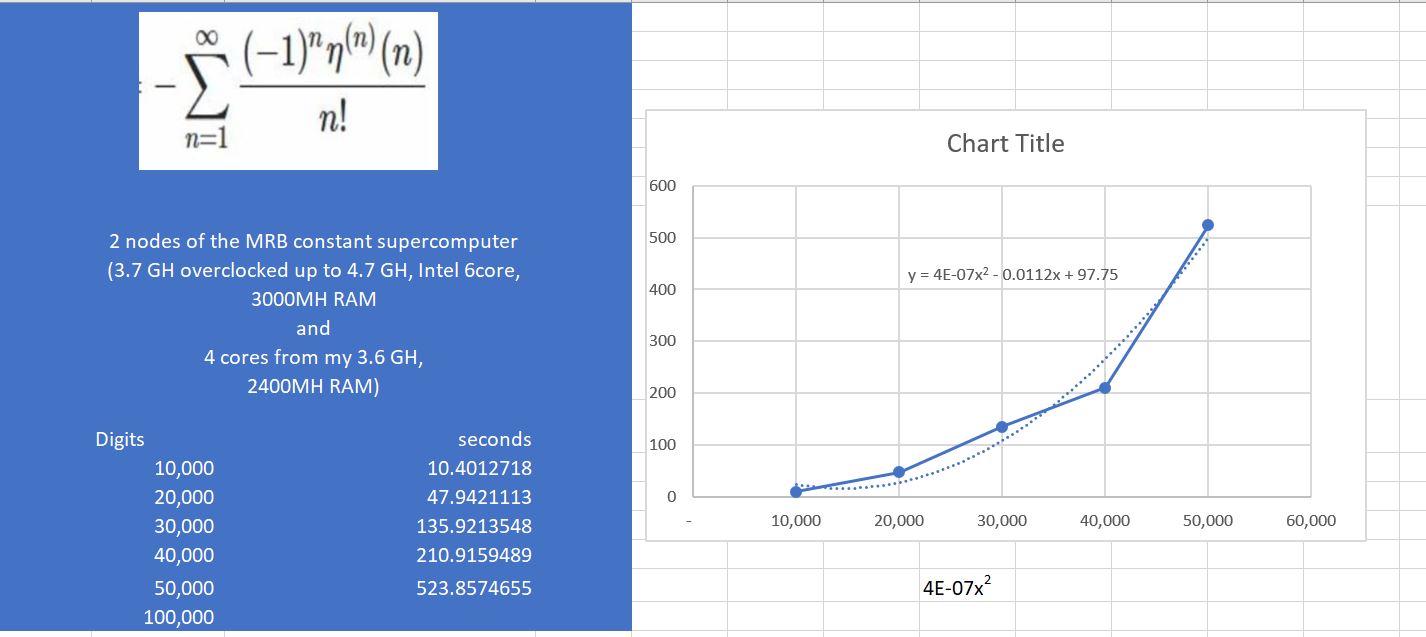

See the attached 100 k eta 4 22 2019, which is also shown below.

The computation time grows 10,000 times more slowly than with the previous method!

I broke a new record with 100,000 digits: processor and total time were 806.5 s and 2606.7281972 s, respectively. See the attached 2nd 100 k eta 4 22 2019.

Here is the work from 100,000 digits.

Print["Start time is ", ds = DateString[], "."];

prec = 100000;

(* Number of required decimals. *) ClearSystemCache[];

T0 = SessionTime[];

expM[pre_] :=

Module[{a, d, s, k, bb, c, end, iprec, xvals, x, pc,

cores = 16 (* = 4*number of physical cores *), tsize = 2^7, chunksize, start = 1, ll,

ctab, pr = Floor[1.005 pre]}, chunksize = cores*tsize;

n = Floor[1.32 pr];

end = Ceiling[n/chunksize];

Print["Iterations required: ", n];

Print["Will give ", end,

" time estimates, each more accurate than the previous."];

Print["Will stop at ", end*chunksize,

" iterations to ensure precision of around ", pr,

" decimal places."]; d = ChebyshevT[n, 3];

{b, c, s} = {SetPrecision[-1, 1.1*n], -d, 0};

iprec = Ceiling[pr/27];

Do[xvals = Flatten[ParallelTable[Table[ll = start + j*tsize + l;

x = N[E^(Log[ll]/(ll)), iprec];

pc = iprec;

While[pc < pr/4, pc = Min[3 pc, pr/4];

x = SetPrecision[x, pc];

y = x^ll - ll;

x = x (1 - 2 y/((ll + 1) y + 2 ll ll));]; (* N[Exp[Log[ll]/ll], pr/4] *)

x = SetPrecision[x, pr];

xll = x^ll; z = (ll - xll)/xll;

t = 2 ll - 1; t2 = t^2;

x =

x*(1 + SetPrecision[4.5, pr] (ll - 1)/

t2 + (ll + 1) z/(2 ll t) -

SetPrecision[13.5, pr] ll (ll - 1) 1/(3 ll t2 + t^3 z)); (* N[Exp[Log[ll]/ll], pr] *)

x, {l, 0, tsize - 1}], {j, 0, cores - 1}, Method -> "EvaluationsPerKernel" -> 32]];

ctab = ParallelTable[Table[c = b - c;

ll = start + l - 2;

b *= 2 (ll + n) (ll - n)/((ll + 1) (2 ll + 1));

c, {l, chunksize}], Method -> "EvaluationsPerKernel" -> 16];

s += ctab.(xvals - 1);

start += chunksize;

st = SessionTime[] - T0; kc = k*chunksize;

ti = (st)/(kc + 10^-4)*(n)/(3600)/(24);

If[kc > 1,

Print[kc, " iterations done in ", N[st, 4], " seconds.",

" Should take ", N[ti, 4], " days or ", N[ti*24*3600, 4],

"s, finish ", DatePlus[ds, ti], "."]];, {k, 0, end - 1}];

N[-s/d, pr]];

t2 = Timing[MRB = expM[prec];]; Print["Finished on ",

DateString[], ". Processor time was ", t2[[1]], " s."];

Print["Enter MRB to print ", Floor[Precision[MRB]],

" digits"];

Start time is Tue 23 Apr 2019 06:49:31.

Iterations required: 132026

Will give 65 time estimates, each more accurate than the previous.

Will stop at 133120 iterations to ensure precision of around 100020 decimal places.

Denominator computed in 17.2324041s.

...

129024 iterations done in 1011. seconds. Should take 0.01203 days or 1040.s, finish Mon 22 Apr 2019 12:59:16.

131072 iterations done in 1026. seconds. Should take 0.01202 days or 1038.s, finish Mon 22 Apr 2019 12:59:15.

Finished on Mon 22 Apr 2019 12:59:03. Processor time was 786.797 s.
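In the first listing, the `While` loop refines $x\approx n^{1/n}$, with the code's `ll` playing the role of $n$. My reading, which is not stated in the post, is that this is Halley's method applied to $f(x)=x^n-n$. Its cubic convergence would match the loop tripling the working precision with `pc = Min[3 pc, pr/4]`. With $y=x^n-n$ (the code's `y`):

$$x_{\text{new}}=x-\frac{2 f(x) f'(x)}{2 f'(x)^2-f(x) f''(x)}=x\left(1-\frac{2 y}{2 n x^n-(n-1) y}\right)=x\left(1-\frac{2 y}{(n+1) y+2 n^2}\right).$$

The last step substitutes $x^n=y+n$. The final form matches the update `x = x (1 - 2 y/((ll + 1) y + 2 ll ll))`.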

Print["Start time is ", ds = DateString[], "."];

prec = 100000;

(* Number of required decimals. *) ClearSystemCache[];

T0 = SessionTime[];

expM[pre_] :=

Module[{lg, a, d, s, k, bb, c, end, iprec, xvals, x, pc,

cores = 16 (* = 4*number of physical cores *), tsize = 2^7, chunksize, start = 1,

ll, ctab, pr = Floor[1.0002 pre]}, chunksize = cores*tsize;

n = Floor[1.32 pr];

end = Ceiling[n/chunksize];

Print["Iterations required: ", n];

Print["Will give ", end,

" time estimates, each more accurate than the previous."];

Print["Will stop at ", end*chunksize,

" iterations to ensure precision of around ", pr,

" decimal places."]; d = ChebyshevT[n, 3];

{b, c, s} = {SetPrecision[-1, 1.1*n], -d, 0};

iprec = pr/2^6;

Do[xvals = Flatten[ParallelTable[Table[ll = start + j*tsize + l;

lg = Log[ll]/(ll); x = N[E^(lg), iprec];

pc = iprec;

While[pc < pr, pc = Min[4 pc, pr];

x = SetPrecision[x, pc];

xll = x^ll; z = (ll - xll)/xll;

t = 2 ll - 1; t2 = t^2;

x =

x*(1 + SetPrecision[4.5, pc] (ll - 1)/

t2 + (ll + 1) z/(2 ll t) -

SetPrecision[13.5, 2 pc] ll (ll - 1)/(3 ll t2 + t^3 z))];

x - lg, {l, 0, tsize - 1}], {j, 0, cores - 1},

Method -> "EvaluationsPerKernel" -> 16]];

ctab = ParallelTable[Table[c = b - c;

ll = start + l - 2;

b *= 2 (ll + n) (ll - n)/((ll + 1) (2 ll + 1));

c, {l, chunksize}], Method -> "EvaluationsPerKernel" -> 16];

s += ctab.(xvals - 1);

start += chunksize;

st = SessionTime[] - T0; kc = k*chunksize;

ti = (st)/(kc + 10^-10)*(n)/(3600)/(24);

If[kc > 1,

Print[kc, " iterations done in ", N[st - stt, 4], " seconds.",

" Should take ", N[ti, 4], " days or ", ti*3600*24,

"s, finish ", DatePlus[ds, ti], "."],

Print["Denominator computed in ", stt = st, "s."]];, {k, 0,

end - 1}];

N[-s/d, pr]];

t2 = Timing[MRBeta2toinf = expM[prec];]; Print["Finished on ",

DateString[], ". Processor and total time were ",

t2[[1]], " and ", st, " s respectively."];
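Both listings sum the alternating series with recurrences that match Algorithm 1 of Cohen, Rodriguez Villegas and Zagier, "Convergence acceleration of alternating series". The identification is mine, made by comparing the recurrences. Write $N$ for the code's `n` (the number of terms) and $a_k$ for the $k$-th entry of `xvals - 1`, so that $k=0$ corresponds to series index $1$. Starting from $b_0=-1$ and $c_{-1}=-d$, the code computes

$$d=T_N(3)=\frac{(3+\sqrt{8})^N+(3-\sqrt{8})^N}{2},\qquad c_k=b_k-c_{k-1},\qquad b_{k+1}=\frac{2 (k+N) (k-N)}{(k+1) (2 k+1)}\,b_k,$$

$$\sum _{k=0}^{\infty } (-1)^k a_k\approx \frac{s}{d},\qquad s=\sum _{k=0}^{N-1} c_k a_k .$$

Because the series in $M$ is $\sum _{n\geq 1} (-1)^n(\cdots )=-\sum _{k\geq 0} (-1)^k a_k$, the code returns `-s/d`. Under that paper's hypotheses on $a_k$, the error shrinks roughly like $(3+\sqrt{8})^{-N}\approx 5.83^{-N}$. That means about $N\approx D/\log _{10}(3+\sqrt{8})\approx 1.31 D$ terms are needed for $D$ digits, consistent with `n = Floor[1.32 pr]`.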

Start time is Tue 23 Apr 2019 06:49:31.

Iterations required: 132026

Will give 65 time estimates, each more accurate than the previous.

Will stop at 133120 iterations to ensure precision of around 100020 decimal places.

Denominator computed in 17.2324041s.

...

131072 iterations done in 2589. seconds. Should take 0.03039 days or 2625.7011182s, finish Tue 23 Apr 2019 07:33:16.

Finished on Tue 23 Apr 2019 07:32:58. Processor and total time were 806.5 and 2606.7281972 s respectively.

MRBeta1 = EulerGamma Log[2] - 1/2 Log[2]^2

EulerGamma Log[2] - Log[2]^2/2

N[MRBeta2toinf + MRBeta1 - MRB, 10]

1.307089967*10^-99742

Attachments:
