Thanks. I had misinterpreted the "Output" decoder as actually creating an output layer. Using the network above seems to generate an error when attempting to train it.

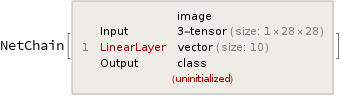

testNet = NetChain[{LinearLayer[10]},

"Input" -> NetEncoder[{"Image", {28, 28}, "Grayscale"}],

"Output" -> NetDecoder[{"Class", Range[0, 9]}]]

myResource = ResourceObject["MNIST"];

trainingData = ResourceData[myResource, "TrainingData"];

testData = ResourceData[myResource, "TestData"];

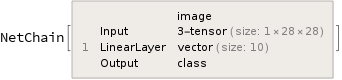

testNet = NetInitialize[testNet]

trainedNet =

NetTrain[testNet, trainingData, BatchSize -> 1000,

MaxTrainingRounds -> 1]

This generates the error:

NetTrain::invindim: Data provided to port "Output" should be a list of length-10 vectors.

A simple check that the net accepts the data and returns output of the expected type appears to work correctly.

testNet[Keys[trainingData[[1]]]]

1

Obviously the network is not trained, so the output digit may be incorrect, but it is a scalar in our range 0-9.
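To narrow this down, it may help to compare what the linear layer emits before decoding with what the training targets look like. The following is only a rough sketch: `rawNet` and `example` are names I made up for illustration, and `rawNet` is simply the same chain rebuilt without the "Output" decoder, so its weights differ from those in `testNet`.

```wolfram
(* One training example; the MNIST data is a list of rules image -> digit *)
example = trainingData[[1]];

(* The target half of the rule: a single integer label *)
Values[example]

(* Hypothetical helper net: same layer and encoder as testNet, but no decoder *)
rawNet = NetInitialize[
   NetChain[{LinearLayer[10]},
    "Input" -> NetEncoder[{"Image", {28, 28}, "Grayscale"}]]];

(* Shape of the undecoded output for the same image *)
Dimensions[rawNet[Keys[example]]]
```

I would expect the last line to report a length-10 vector while the target is a single integer, which at least matches the wording of the message, though I am not certain that is what NetTrain is comparing.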

Do you have any ideas why the it believes the output data is not of the correct length?