What do you think of the idea of automatically judging whether a piece of data is beautiful? The data could be an image (ImageData), the result of a computation (e.g. CellularAutomaton), or anything else, although I am primarily thinking of a list or an array of numbers.

My first thought was that there are many image-processing filters, but I don't know which of them might be useful. My next thought was mathematical transforms. For example, after taking the Fourier or Hadamard transform you expect the coefficients to decay, and if they don't, the data is probably not nice.
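As a rough illustration of the decay idea, here is a minimal sketch that compares the Fourier coefficient magnitudes of a smooth list with those of a random one. The names `n`, `smooth`, `noisy` and `spectrum` are made up for this example, and the Gaussian bump is just one arbitrary choice of "nice" data.

```wolfram
n = 64;

(* a smooth bump centered in the list; an arbitrary example of "nice" data *)
smooth = Table[Exp[-((k - n/2)/8.)^2], {k, n}];

(* uniformly random values; an example of data with no structure *)
noisy = RandomReal[1, n];

(* magnitudes of the first half of the coefficients; for real input the
   second half mirrors the first, so it carries no extra information here *)
spectrum[list_] := Take[Abs[Fourier[list]], Floor[Length[list]/2]];

ListLogPlot[{spectrum[smooth], spectrum[noisy]},
  Joined -> True, PlotLegends -> {"smooth", "random"}]
```

On a log scale one would expect the smooth curve to fall off quickly, while the random one stays roughly flat after the constant term.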

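This code drops the constant term and then takes a crude measure of the spread of the remaining coefficient magnitudes. Rescale maps them to the range 0 to 1, Round sends those closer to the min to 0 and those closer to the max to 1, and the Mean of 1 minus that is the fraction of coefficients that are closer to the min than to the max. Using Mean is a shortcut for counting the 0's and 1's without needing to know the length or dimension of the data. (Note that Fourier does not assume the size is a power of 2, but Hadamard does.)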

FourierBeauty[list_] := Mean[1. - Round[Rescale[Abs[Rest[Flatten[Fourier[list]]]]]]]

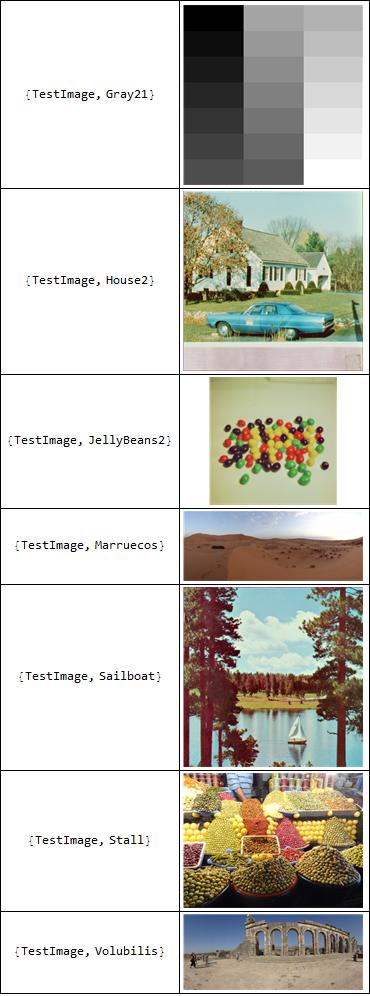
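To see what each stage of the score does, here is a small sketch that unrolls the same steps on a short alternating list. The variable names `data`, `coeffs` and `bits` are only for illustration.

```wolfram
data = {1, 0, 1, 0, 1, 0, 1, 0};

(* magnitudes of all Fourier coefficients except the constant term *)
coeffs = Abs[Rest[Flatten[Fourier[data]]]];

(* 0 for coefficients closer to the min, 1 for those closer to the max *)
bits = Round[Rescale[coeffs]];
(* bits is {0, 0, 0, 1, 0, 0, 0}: only the alternating frequency is large *)

(* fraction of coefficients that are closer to the min *)
Mean[1. - bits]
(* 6/7, about 0.857 *)
```

A list with a single dominant frequency scores high because almost all of the other coefficients are small compared with the largest one.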

Maybe for an image this might not be bad. Here is what it picks out of the ExampleData test images:

Grid[{#, ExampleData[#]} & /@
  MaximalBy[ExampleData["TestImage"],
    FourierBeauty[
      ImageData[
        Binarize[
          ImageResize[
            ColorConvert[ExampleData[#], "Grayscale"], {64, 64}]]]] &],
  Frame -> All]

But here are the cellular automaton rules it likes the most:

MaximalBy[Range[0, 255],
  Sum[FourierBeauty[CellularAutomaton[#, RandomInteger[1, 2^8], {{0, 2^8 - 1}}]], 100] &]
(* -> {1, 3, 5, 17, 57, 87, 119, 127} *)
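
Since the cellular automaton arrays above are 2^8 by 2^8, the power-of-2 requirement of the Hadamard transform is met, so the same kind of score could be tried with Hadamard coefficients. The following is only a sketch: `HadamardBeauty` is a hypothetical name, it assumes a square matrix whose side is a power of 2, and it assumes a Wolfram Language version that provides HadamardMatrix.

```wolfram
(* Hypothetical Hadamard analogue of the Fourier score.
   Assumes array is a square matrix whose side length is a power of 2. *)
HadamardBeauty[array_?MatrixQ] := Module[{h, coeffs},
  (* numeric Hadamard matrix of matching size *)
  h = N[HadamardMatrix[Length[array]]];
  (* 2D transform: apply the matrix to the rows and to the columns *)
  coeffs = h . array . h;
  (* drop the constant term, then score exactly as in the Fourier version *)
  Mean[1. - Round[Rescale[Abs[Rest[Flatten[coeffs]]]]]]]

(* example use on one elementary CA evolution from a random initial row *)
HadamardBeauty[CellularAutomaton[30, RandomInteger[1, 2^8], {{0, 2^8 - 1}}]]
```

Because the initial condition is random, the score will differ from run to run, just as with the Fourier version.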