Abstract

The purpose of this project is to create a microsite that recognizes the plane in an image uploaded by the user. The microsite uses a neural network to produce the classification result. Because planes are complex objects, they are hard to classify with simple neural networks, while training complex neural networks takes a lot of time and computing resources. My goal is to get the highest accuracy with the least amount of computation. To achieve this, I used a technique called transfer learning, which is explained in the Introduction.

Introduction

The concept of artificial neural networks was first introduced in the 1940s, inspired by biological neural networks. Artificial neural networks (hereinafter simply "neural networks") share some capabilities with the human brain: they can be trained, and they are good at tasks such as image recognition, which is the basis of this project. A neural network is built from nodes called artificial neurons, which are modeled on neurons in the biological brain, although they function differently. An artificial neuron receives signals from the previous layer and computes its output signal with a function contained in the neuron. The parameters of these functions (the weights and biases) are determined by training. During training, the network is given a number of correctly labeled examples, called the training set, from which it adjusts these parameters; another group of examples, the validation set, is then used to check the trained network on data it has not seen and to detect overfitting.
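A minimal Python sketch of the artificial neuron described above: it takes the signals from the previous layer, forms a weighted sum plus a bias, and applies an activation function. The weights and bias are the parameters that training adjusts. The sigmoid activation and the sample numbers are illustrative choices only; ResNet-50, used later in this project, relies mainly on ReLU activations.

```python
import math


def sigmoid(z):
    """Logistic activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs plus a bias, then activation.

    `weights` and `bias` are the trainable parameters.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Three incoming signals from a (hypothetical) previous layer
print(neuron([1.0, 0.5, -1.0], [0.4, -0.2, 0.1], bias=0.0))
```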

Neural networks have several advantages over classical programming: they are comparatively simple to design and train, and one network model can be trained to perform different but similar tasks. However, they are computationally expensive: a neural network needs far more computation than a classical program (in classical programming, more of the work is done in the programmer's head). In my project, the objects are pictures of planes, which are complex and require a large amount of computation. Therefore, I used a technique called transfer learning to reduce the computation needed. Transfer learning takes a network trained on one task and reuses it for another, similar task [1]. Since the features that need to be noticed in both tasks are similar, the accuracy should not be much lower than that of a newly trained network. With transfer learning, I was able to train on large datasets on my laptop, which has limited computing power.

The Code

```mathematica
(* Load all JPEGs of each plane model and conform them to 256x256 pixels *)
images747 =
  ConformImages[Import /@ FileNames["Downloads/256-747/*.jpg"], {256, 256}];
images737 =
  ConformImages[Import /@ FileNames["Downloads/256-737/*.jpg"], {256, 256}];
images707 =
  ConformImages[Import /@ FileNames["Downloads/256-707/*.jpg"], {256, 256}];
```

First, we import the images and conform them to 256×256 pixels. This size keeps most of the useful detail while lowering the resolution (and therefore the size) of each image, which allows a larger dataset.
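To make the size argument concrete, the Python sketch below compares the raw (uncompressed, 8-bit RGB) size of a conformed 256×256 image with that of a larger photo. The 4000×3000 photo size is a hypothetical example for comparison, not a measurement from this dataset.

```python
def raw_rgb_bytes(width, height, channels=3):
    """Size of an uncompressed 8-bit image: one byte per channel per pixel."""
    return width * height * channels


conformed = raw_rgb_bytes(256, 256)   # conformed training image
original = raw_rgb_bytes(4000, 3000)  # hypothetical camera photo, for comparison only
print(conformed, original)  # 196608 36000000
```

At these sizes, the same memory holds roughly 180 conformed images for every full-resolution photo.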

```mathematica
baseNet = NetModel["ResNet-50 Trained on ImageNet Competition Data"]
```

We used ResNet-50 as the base of our network. ResNet-50 is designed and trained to recognize the objects in an image. Because of the limited computing power of my machine, we used transfer learning, which reuses the feature-extraction part of ResNet-50 as the basis of the new network.

```mathematica
clippedNet = NetTake[baseNet, 23]
```

The first 23 layers of ResNet-50 extract the features of the object in the picture, while the layers after them map those features to the 1000 ImageNet classes. We keep only these 23 layers as the basis of our network.

```mathematica
resizedNet =
  NetReplacePart[clippedNet, "Input" -> NetEncoder[{"Image", {256, 256}}]]
```

Next, we change the input size of the net to 256×256 (instead of the 224×224 images ResNet-50 was trained on) by replacing its input encoder.
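Changing the input size works because the layers before flattening (convolutions, normalization and pooling) accept any sufficiently large image, so only the length of the feature vector changes. The Python sketch below checks that 256×256 inputs give the 8192-element vectors that learnNet expects. It assumes the clipped net ends like the original ResNet-50 definition: an overall downsampling factor of 32, 2048 channels in the last block, and a fixed 7×7 average pooling with stride 1 followed by flattening. These layer details are assumptions, consistent with the 8192 used in learnNet but not read from the NetModel itself.

```python
def resnet50_feature_length(side, total_stride=32, channels=2048, pool=7, pool_stride=1):
    """Length of the flattened feature vector for a square side x side input.

    Assumes the backbone shrinks the image by `total_stride`, the last block
    outputs `channels` feature maps, and a fixed pool x pool average pooling
    (stride `pool_stride`, no padding) is applied before flattening.
    """
    grid = side // total_stride                 # spatial size after the last block
    pooled = (grid - pool) // pool_stride + 1   # spatial size after pooling
    if pooled < 1:
        raise ValueError("input too small for the pooling window")
    return channels * pooled * pooled


print(resnet50_feature_length(224))  # 2048  (original input size)
print(resnet50_feature_length(256))  # 8192  (size used in this project)
```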

```mathematica
feats747 = resizedNet[images747];
feats737 = resizedNet[images737];
feats707 = resizedNet[images707];
```

Next, we apply the resized net to each set of images. The resulting feature vectors are used to train a linear layer that maps the features to the different categories of planes.
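Computing the features once, before training, is what keeps the cost low: ResNet-50 runs only once per image, and every round of NetTrain (at most 35 here, set with MaxTrainingRounds) reuses the stored vectors. The Python sketch below just counts the extractor's forward passes; the dataset size of 1000 images is hypothetical, and the comparison ignores data augmentation, which would require recomputing features.

```python
def forward_passes(num_images, rounds, precompute):
    """Number of times the large feature extractor runs during training.

    With precomputed features it runs once per image; otherwise it would run
    once per image in every training round.
    """
    return num_images if precompute else num_images * rounds


n = 1000  # hypothetical dataset size
print(forward_passes(n, rounds=35, precompute=True))   # 1000
print(forward_passes(n, rounds=35, precompute=False))  # 35000
```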

```mathematica
feats = RandomSample[
   Join[Map[# -> 0 &, feats747], Map[# -> 1 &, feats737],
    Map[# -> 2 &, feats707]]];
trainFeat = feats[[1 ;; Floor[0.75 Length[feats]]]];
testFeat = feats[[Floor[0.75 Length[feats]] + 1 ;; Length[feats]]];
```

Each feature vector is labeled with its category (0 for the 747, 1 for the 737, 2 for the 707) and the labeled examples are shuffled. The first 3/4 become the training set and the remaining 1/4 the test set.
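The same labeling, shuffling and 75/25 split can be sketched in Python as follows. The function name and the toy feature vectors are hypothetical stand-ins for the ResNet outputs; int() matches Floor here because the product is non-negative.

```python
import random


def label_and_split(groups, train_fraction=0.75, seed=None):
    """Attach an integer class label to each feature vector, shuffle, and split.

    `groups` is a list of lists of feature vectors; group i gets label i,
    mirroring the 0/1/2 labels used for the 747/737/707.
    """
    labeled = [(feat, label) for label, feats in enumerate(groups) for feat in feats]
    rng = random.Random(seed)
    rng.shuffle(labeled)                      # like RandomSample on the joined list
    cut = int(train_fraction * len(labeled))  # like Floor[0.75 Length[feats]]
    return labeled[:cut], labeled[cut:]


# Toy one-element "feature vectors" for three classes
train, test = label_and_split([[[0.1]] * 8, [[0.2]] * 8, [[0.3]] * 4], seed=0)
print(len(train), len(test))  # 15 5
```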

```mathematica
learnNet = NetChain[{
    DropoutLayer[0.3],
    LinearLayer[3],
    SoftmaxLayer[]
    },
   "Input" -> 8192,
   "Output" -> NetDecoder[{"Class", {0, 1, 2}}]
   ]
```

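Next, a small three-layer net is created: a dropout layer (rate 0.3) to reduce overfitting, a linear layer with one output per class, and a softmax layer that turns those outputs into probabilities. It takes the 8192-element feature vectors as input.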
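When the trained net is used for prediction, the dropout layer passes its input through unchanged, so the head reduces to a linear map followed by softmax. A minimal Python sketch of that forward pass, with tiny hypothetical weights in place of the real 3×8192 weight matrix:

```python
import math


def linear(x, weights, bias):
    """Linear layer: one output per row of `weights`, i.e. W.x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def softmax(z):
    """Softmax layer: exponentiate and normalize so the outputs sum to 1."""
    m = max(z)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def head(features, weights, bias):
    """Dropout (identity at prediction time) -> Linear -> Softmax."""
    return softmax(linear(features, weights, bias))


# Hypothetical 4-dimensional features and 3 classes (the real net uses 8192 inputs)
W = [[1.0, 0.0, 0.0, 0.0],
     [0.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0, 1.0]]
b = [0.0, 0.0, 0.0]
probs = head([2.0, 1.0, 0.5, 0.5], W, b)
print(probs)
```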

```mathematica
trained =
 NetTrain[learnNet, trainFeat, ValidationSet -> testFeat,
  MaxTrainingRounds -> 35]
```

This net is trained to predict the category from the features, for at most 35 rounds, with the test features used as the validation set.
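NetTrain fits the weights of this head by minimizing a cross-entropy loss on trainFeat. The Python sketch below shows the same idea as plain batch gradient descent on softmax regression (a linear layer plus softmax). The learning rate, epoch count and toy data are assumptions; dropout is omitted, and NetTrain itself uses its own optimizer (ADAM by default) with mini-batches.

```python
import math


def softmax(z):
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    s = sum(exps)
    return [e / s for e in exps]


def train_softmax_regression(data, num_features, num_classes, lr=0.5, epochs=200):
    """Batch gradient descent on cross-entropy loss for a Linear+Softmax head.

    `data` is a list of (feature_vector, class_index) pairs.
    """
    W = [[0.0] * num_features for _ in range(num_classes)]
    b = [0.0] * num_classes
    n = len(data)
    for _ in range(epochs):
        gW = [[0.0] * num_features for _ in range(num_classes)]
        gb = [0.0] * num_classes
        for x, y in data:
            z = [sum(w * xi for w, xi in zip(W[k], x)) + b[k] for k in range(num_classes)]
            p = softmax(z)
            for k in range(num_classes):
                # Gradient of cross-entropy w.r.t. logit k is p_k - [k == y]
                err = p[k] - (1.0 if k == y else 0.0)
                gb[k] += err / n
                for j in range(num_features):
                    gW[k][j] += err * x[j] / n
        for k in range(num_classes):
            b[k] -= lr * gb[k]
            for j in range(num_features):
                W[k][j] -= lr * gW[k][j]
    return W, b


def predict(W, b, x):
    z = [sum(w * xi for w, xi in zip(row, x)) + bk for row, bk in zip(W, b)]
    return max(range(len(z)), key=z.__getitem__)


# Toy, linearly separable "features" for three classes
data = [([1.0, 0.0], 0), ([0.9, 0.1], 0),
        ([0.0, 1.0], 1), ([0.1, 0.9], 1),
        ([-1.0, -1.0], 2), ([-0.9, -1.1], 2)]
W, b = train_softmax_regression(data, num_features=2, num_classes=3)
print([predict(W, b, x) for x, _ in data])  # [0, 0, 1, 1, 2, 2]
```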

```mathematica
combinedNet = NetChain[{
   resizedNet,
   trained
   }]
```

The trained classifier is then chained after the feature-extraction net clipped from ResNet-50, giving a single network that goes directly from an image to a class.

```mathematica
Recognize =
 NetReplacePart[combinedNet,
  "Output" -> NetDecoder[{"Class", {747, 737, 707}}]]
```

Finally, the output decoder, which originally produced the classes 0, 1 and 2, is replaced by one that produces the plane models directly (0 → 747, 1 → 737, 2 → 707).
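Swapping the decoder does not change any weights; it only renames the output positions. Position i of the softmax output keeps its meaning, so the class order must match the order used when the features were labeled. A Python sketch of that mapping, with a made-up probability vector:

```python
# Class order used by the new decoder: index 0 -> 747, 1 -> 737, 2 -> 707
CLASS_LABELS = [747, 737, 707]


def decode(probabilities, labels=CLASS_LABELS):
    """Turn a probability vector into a label -> probability mapping and the top label."""
    if len(probabilities) != len(labels):
        raise ValueError("one probability per class label expected")
    by_label = dict(zip(labels, probabilities))
    top = max(by_label, key=by_label.get)
    return top, by_label


top, probs = decode([0.1, 0.7, 0.2])
print(top, probs)  # 737 {747: 0.1, 737: 0.7, 707: 0.2}
```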

```mathematica
Export["Desktop/Boeing-v2.wlnet", Recognize];
```

The neural net is exported to the desktop as a .wlnet file.

```mathematica
flickr = ServiceConnect["Flickr"]
```

This connects to the Flickr service and stores the connection in the variable flickr.

```mathematica
results =
  flickr["ImageSearch", {"Keywords" -> {"Boeing 747"},
    "Elements" -> "Images", MaxItems -> 100}];
```

This searches Flickr for up to 100 images matching the keyword "Boeing 747", which are then used to test the classifier.

```mathematica
Recognize = Import["Desktop/Boeing.wlnet"]
Counts@Recognize[results]
```
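Counts tallies how many of the Flickr images the network assigned to each class. Since the search asked for Boeing 747 photos, the share classified as 747 gives a rough accuracy estimate; it is only rough because search results can include photos that are not of a 747 at all. A Python sketch of that tally, using made-up predictions rather than results from this project:

```python
from collections import Counter


def tally(predictions, expected):
    """Count predicted labels and return the fraction equal to `expected`."""
    counts = Counter(predictions)
    total = len(predictions)
    share = counts[expected] / total if total else 0.0
    return counts, share


# Made-up predictions for illustration only
preds = [747] * 8 + [737] * 2
counts, share = tally(preds, expected=747)
print(dict(counts), share)  # {747: 8, 737: 2} 0.8
```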

```mathematica
CopyFile["Desktop/Boeing-v2.wlnet", CloudObject["Boeing-v2.wlnet"]]
CopyFile["Desktop/Boeing.wlnet", CloudObject["Boeing.wlnet"]]
```

Both network files are copied to the Wolfram Cloud so that the deployed pages can load them directly, which allows faster evaluation.

```mathematica
form1 = FormPage[{"image" -> "Image"},
   Module[{
      (* evaluate the network once; p associates each class with its probability *)
      p = Import["Boeing-v2.wlnet"][#image, "Probabilities"],
      (* example pictures of each plane, shown next to the result *)
      i1 = Import[CloudObject["https://www.wolframcloud.com/objects/billy.li/747,PNG"]],
      i2 = Import[CloudObject["https://www.wolframcloud.com/objects/billy.li/737,PNG"]],
      i3 = Import[CloudObject["https://www.wolframcloud.com/objects/billy.li/707,PNG"]],
      res},
     (* class with the highest probability *)
     res = First[Keys[MaximalBy[p, Identity]]];
     Column[{
       "<h2 style='margin-bottom:40px' class='section form-title'>Your Result:</h2>",
       If[res == 747, i1, If[res == 737, i2, i3]],
       Row[{"<h2 style='margin-bottom:40px' class='section form-title'>" <>
          "Boeing " <> ToString@res <> "</h2>"}],
       "<h4 style='margin-bottom:50px' class='section form-title'>" <>
        ToString@p[res] <> "</h4>",
       "Probabilities:",
       Grid[{{"Boeing 747", "Boeing 737", "Boeing 707"},
         {p[[1]], p[[2]], "Not Supported"}}],
       Hyperlink["Result is incorrect? Submit the image to this website.",
        "https://www.wolframcloud.com/objects/billy.li/Error"]}]
     ] &,
   AppearanceRules -> <|"Title" -> "Boeing Plane Classifier",
     "Description" ->
      "Upload the picture you took at the airport of your flight (This version does not support Boeing 707)",
     "SubmitLabel" -> "Classify this plane"|>,
   PageTheme -> "Blue"];
```

This is the version that supports only the Boeing 737 and 747, which is more accurate. i1, i2 and i3 are example images of each plane that I chose; the one matching the prediction is shown with the result.
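In this page the 707 column of the probability table holds a placeholder instead of a number. A small Python sketch of building such a row; the function name and the fixed class list are hypothetical:

```python
ALL_CLASSES = [747, 737, 707]


def probability_row(probs, classes=ALL_CLASSES, placeholder="Not Supported"):
    """One table cell per class: the model's probability, or a placeholder
    for classes the deployed model does not support."""
    return [probs.get(c, placeholder) for c in classes]


print(probability_row({747: 0.9, 737: 0.1}))  # [0.9, 0.1, 'Not Supported']
```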

form2 = FormPage[{"image" -> "Image"},

Module[{p = Import["Boeing-v2.wlnet"][#image , "Probabilities"],

i1 =Import[

CloudObject["https://www.wolframcloud.com/objects/billy.li/747,PNG"]], i2 =Import[

CloudObject["https://www.wolframcloud.com/objects/billy.li/737,PNG"]], i3 =Import[

CloudObject["https://www.wolframcloud.com/objects/billy.li/707,PNG"]], res},

res = First[Keys[MaximalBy[p, Identity]]];

Column[{"<h2 style='margin-bottom:40px' class='section \

form-title'>Your Result:<h2/>",

If[res == 747, i1, If[res == 737, i2, i3]],

Row[{"<h2 style='margin-bottom:40px' class='section \

form-title'>" <> "Boeing " <> ToString@res <> "<h3/>"}],

"<h4 style='margin-bottom:50px' class='section form-title'>" <>

ToString@

If[res == 747, p[[1]], If[res == 737, p[[2]], p[[3]]]] <>

"</h4>", "Probabilities:",

Grid[{{"Boeing 747", "Boeing 737", "Boeing 707"}, {p[[1]],

p[[2]], p[[3]]}}],

Hyperlink[

"Result is incorrect? Submit the image to this website.",

"https://www.wolframcloud.com/objects/billy.li/Error"]}]

] &,

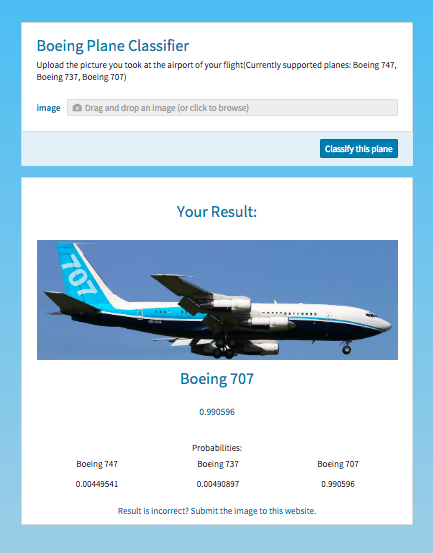

AppearanceRules -> <|"Title" -> "Boeing Plane Classifier",

"Description" ->

"Upload the picture you took at the airport of your \

flight(Currently supported planes: Boeing 747, Boeing 737, Boeing \

707)", "SubmitLabel" -> "Classify this plane"|>, PageTheme -> "Blue"];

This is the version that supports the Boeing 737, 747 and 707; it is less accurate. The network is evaluated only once per submission (all class probabilities are requested in a single call) to save evaluation time.
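Evaluating the network only once means that a single call with "Probabilities" returns everything the page needs. The predicted class, its probability and the table row are all read from that one result instead of calling the network again. A Python sketch of the pattern, with a hypothetical fake_model standing in for the network so the number of calls can be counted:

```python
def classify_once(image, model):
    """Call the model a single time and derive everything the page shows from
    that one probability mapping: top class, its probability, and the full row."""
    probs = model(image)  # the only model evaluation
    top = max(probs, key=probs.get)
    return top, probs[top], list(probs.values())


# Hypothetical stand-in for the network that records how often it is called
calls = []


def fake_model(image):
    calls.append(image)
    return {747: 0.2, 737: 0.5, 707: 0.3}


result = classify_once("photo.jpg", fake_model)
print(result)      # (737, 0.5, [0.2, 0.5, 0.3])
print(len(calls))  # 1
```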

```mathematica
CloudDeploy[form1, "Boeing-v1", Permissions -> "Public"]
CloudDeploy[form2, "Boeing-v2", Permissions -> "Public"]
```

Both pages are deployed to the cloud with public permissions.

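```mathematica
(* Error-report page: emails the submitted image to the author *)
errorForm = FormPage[{"image" -> "Image"},
   (SendMail["2945153481@qq.com", #image];
     "Message Sent. Thanks for your Support.") &];
```

The page sends the submitted image to the email address 2945153481@qq.com.

```mathematica
CloudDeploy[errorForm, "Error", Permissions -> "Public"]
```

The page is deployed to the cloud with public permissions, at the "Error" address that the classifier pages link to.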

Conclusion

With a decent amount of training and testing data, a neural network can be very accurate. When transfer learning is also employed properly, the accuracy of the classification can be greatly increased with the same amount of computation. In the future, besides increasing the amount of training and testing data, there are a few ways this project could be improved. First, recognize the direction from which the photo was taken, then classify it with a neural network trained specifically on photos taken from that direction. This should reduce errors caused by the nonuniformity of the training set with respect to viewing direction. Second, first use a neural network to determine which airline operates the plane, then exclude all plane types that the airline does not operate. This will become more useful as the number of categories increases.

Here are the links to the microsites (the link to the error-report site can be found on these pages):

https://www.wolframcloud.com/objects/billy.li/Boeing-v1

https://www.wolframcloud.com/objects/billy.li/Boeing-v2
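The second improvement, excluding plane types an airline does not operate, amounts to dropping the probabilities of impossible classes and renormalizing the rest. A Python sketch of that step, assuming an airline classifier and a fleet list were available; the fleet below is made up for illustration:

```python
def restrict_to_fleet(probs, fleet):
    """Keep only classes in `fleet` and rescale their probabilities to sum to 1."""
    kept = {c: p for c, p in probs.items() if c in fleet}
    total = sum(kept.values())
    if total == 0:
        raise ValueError("no probability mass left on the airline's fleet")
    return {c: p / total for c, p in kept.items()}


probs = {747: 0.5, 737: 0.3, 707: 0.2}
hypothetical_fleet = {737, 707}  # made-up fleet for illustration
print(restrict_to_fleet(probs, hypothetical_fleet))  # {737: 0.6, 707: 0.4}
```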

Many thanks to Andrea Griffin, Richard Hennigan, Chip Hurst, Michael Kaminsky and Rob Morris for your support on the project (listed by last name, not by level of thanks!)

References

- [1] H.-C. Shin et al., "Deep Convolutional Neural Networks for Computer-Aided Detection: CNN Architectures, Dataset Characteristics and Transfer Learning," IEEE Trans. Med. Imaging, vol. 35, no. 5, pp. 1285-1298, 2016.
