Dabrowski, I am not sure this is what you are looking for, but here is an example for your information.

(* training data *)

in1 = Table[RandomReal[], {100}, {2}];

in2 = Table[RandomReal[], {100}, {3}];

val = Table[RandomInteger[{1, 10}], {100}];

traingdata = (Association[{"In1" -> #[[1]], "In2" -> #[[2]],
      "Val" -> #[[3]]}] & /@ Transpose[{in1, in2, val}]);

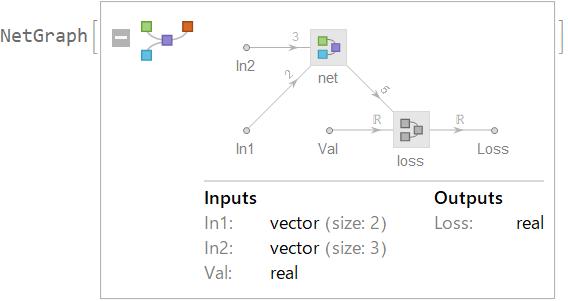

(* define network *)

net = NetGraph[{LinearLayer[5, "Input" -> 2], LinearLayer[5, "Input" -> 3]},
   {NetPort["In1"] -> 1 -> NetPort["Out1"],
    NetPort["In2"] -> 2 -> NetPort["Out2"]}];

loss = NetGraph[{ThreadingLayer[(#1 + #2) &], SummationLayer[],
    MeanSquaredLossLayer[]},
   {{NetPort["Out1"], NetPort["Out2"]} -> 1 -> 2,
    {2, NetPort["Val"]} -> 3}];
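For reference, the loss graph adds the two 5-element outputs elementwise, sums the result to a single number, and compares that number with "Val" by mean squared error. Here is a plain-function sketch of the same arithmetic. The name lossValue is hypothetical and only for illustration, and it assumes the mean squared loss of a scalar reduces to a plain squared difference.

```wolfram
(* Illustration only: lossValue is a hypothetical helper, not part of the network. *)
(* It mirrors ThreadingLayer[(#1 + #2) &] -> SummationLayer[] -> MeanSquaredLossLayer[]. *)
lossValue[out1_List, out2_List, val_] := (Total[out1 + out2] - val)^2;

(* Elementwise sum is {1, 2, 3, 4, 6}, total 16, target 10, so (16 - 10)^2 *)
lossValue[{1, 2, 3, 4, 5}, {0, 0, 0, 0, 1}, 10]
(* 36 *)
```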

lossNet = NetGraph[{"net" -> net, "loss" -> loss},
   {NetPort["net", "Out1"] -> NetPort["loss", "Out1"],
    NetPort["net", "Out2"] -> NetPort["loss", "Out2"]}];

(* training *)

results = NetTrain[lossNet, traingdata]
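If you then want the two-output network without the loss part, something like the following should work. It is a sketch that assumes results holds the trained lossNet returned by the NetTrain call above. The names trained and out and the input values are made up for illustration.

```wolfram
(* Assumes `results` is the trained lossNet from the NetTrain call above. *)
(* Pull out the inner graph that was named "net" when lossNet was built. *)
trained = NetExtract[results, "net"];

(* Evaluate on one example: "In1" takes 2 numbers, "In2" takes 3 (illustrative values). *)
out = trained[<|"In1" -> {0.2, 0.7}, "In2" -> {0.1, 0.5, 0.9}|>];

(* A net with several output ports returns an association keyed by port name. *)
Keys[out]
```

Each of out["Out1"] and out["Out2"] should be a list of 5 numbers, matching the LinearLayer[5, ...] sizes.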