Goal

Squash is a racket sport played in a four-walled court with a soft ball. The goal of this project is to recognize the positions of the players and the ball in every frame of a recorded squash game. Such data is useful for gathering all kinds of statistics about a game and for studying the strategy and tactics of individual players. The task is challenging because the ball can be blurry, is only a few pixels in size in any given frame, and is sometimes obscured by the players. The players also constantly cross over each other and often occupy the same region of the frame, which makes it harder to track them individually. Existing solutions to these problems rely either on slow deep learning algorithms or on multiple carefully positioned cameras. Here I propose an almost entirely non-ML approach to tracking the ball and a simple color-based algorithm for tracking the players.

Implementation

Tracking the ball

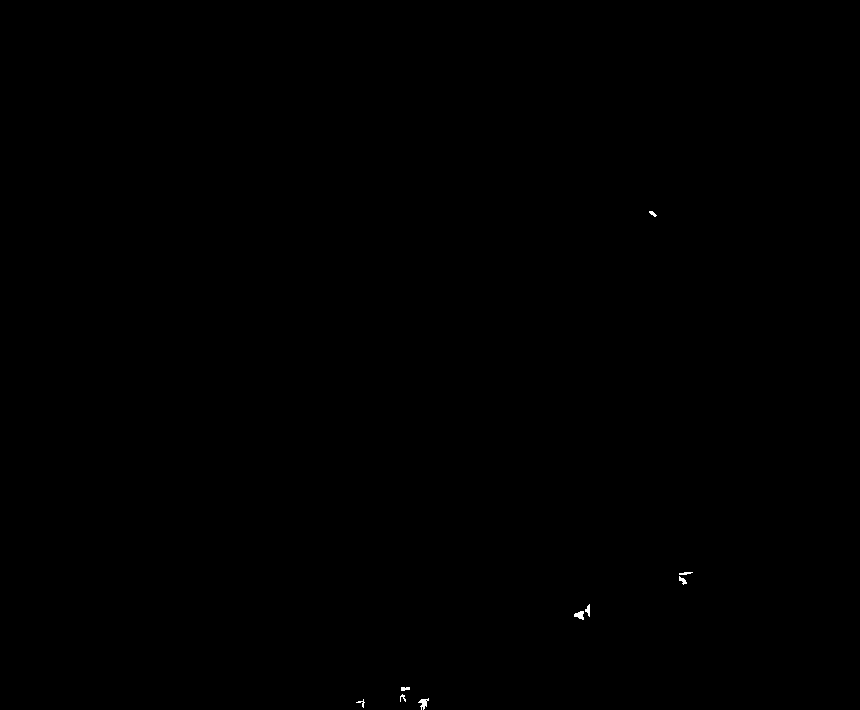

The algorithm starts with a frame like this:  The frame is binarized, normalized, and then binarized again with a very high threshold. This removes all of the stationary elements from each frame. After that, the algorithm puts detects the players and puts black boxes around them, and after a round of filtering and denoising we get an image:

The frame is binarized, normalized, and then binarized again with a very high threshold. This removes all of the stationary elements from each frame. After that, the algorithm puts detects the players and puts black boxes around them, and after a round of filtering and denoising we get an image:

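```mathematica
(* Assumes images (the list of frames), frames (the number of frames) and the
   parameters initialbin, humandetect, finalbin and smallcomponents are defined earlier. *)

(* 1. Binarize every frame *)
binimages = Binarize[#, initialbin] & /@ images;

(* 2. Average the binarized frames: pixels that are white in most frames
   (walls, court lines, other stationary objects) end up bright in the mask *)
scaledimgs = ImageMultiply[#, 1/frames] & /@ binimages;
imgmask = ImageAdd @@ scaledimgs;

(* 3. Subtract the mask from each frame to suppress the stationary elements *)
maskedimgs = ImageDifference[#, imgmask] & /@ binimages;

(* 4. Cover every detected person with a filled black rectangle *)
boxedimgs = Table[
   Image[Show[maskedimgs[[n]],
     Graphics[{Opacity[1], Black,
       ImageBoundingBoxes[images[[n]], Entity["Concept", "Person::93r37"],
        AcceptanceThreshold -> humandetect]}]]],
   {n, frames}];

(* 5. High-threshold binarization, denoising, and removal of small specks *)
imgbins = Table[
   DeleteSmallComponents[
    TotalVariationFilter[Binarize[boxedimgs[[n]], finalbin]],
    smallcomponents],
   {n, frames}];
```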

Here are all of the processed images layered on top of each other:


A human can clearly see the trajectory of the ball, but there is also a lot of noise from the players' limbs and rackets sticking out of their bounding boxes. However, there are still noticeable patterns that distinguish the ball from other objects. If there is an object in the top part of the image, it is probably the ball. If the ball is on the left side of the court, it is probably the leftmost object, and vice versa for the right side. Using these simple heuristics, the ball can be detected in a high percentage of frames with little error.
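The section does not include the ball-selection code itself. Below is a minimal Wolfram Language sketch of the two heuristics above, not the original implementation: `ballCandidates`, `pickBall`, the `topFraction` default of 0.3, and the use of the previous ball position to decide which side of the court the ball is on are all assumptions made for illustration.

```mathematica
(* Candidate objects in a processed binary frame: one {x, y} centroid per
   connected white component, in image coordinates (origin at the bottom left,
   so a larger y is higher up in the frame). *)
ballCandidates[img_Image] := Values[ComponentMeasurements[img, "Centroid"]]

(* Hypothetical ball picker implementing the two heuristics.
   topFraction: assumed share of the frame height that counts as the "top part".
   prevX: assumed x coordinate of the ball in the previous detection. *)
pickBall[candidates_List, {width_, height_}, prevX_, topFraction_ : 0.3] :=
 Module[{top},
  Which[
   candidates === {}, Missing["NotDetected"],
   (* 1. an object in the top part of the frame is most likely the ball;
      if there are several, take the highest one *)
   (top = Select[candidates, #[[2]] > (1 - topFraction) height &]) =!= {},
   First[MaximalBy[top, Last]],
   (* 2. otherwise take the outermost object on the side where the ball was last seen *)
   prevX < width/2, First[MinimalBy[candidates, First]],
   True, First[MaximalBy[candidates, First]]]]
```

Applied to the processed frames, one would call `pickBall[ballCandidates[imgbins[[n]]], ImageDimensions[imgbins[[n]]], prevX]` frame by frame, updating `prevX` with the x coordinate of each detected ball. Frames that return `Missing["NotDetected"]` are the ones where no candidate is found. Since a good `topFraction` depends on the camera angle, it is the kind of parameter that would need retuning for footage from a different venue.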

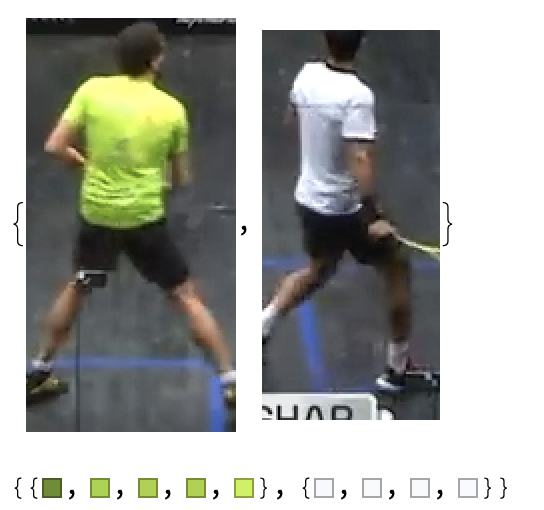

Tracking the players

Initially, I attempted to track the players using ImageFeatureTrack, but because the players cross over each other and turn almost constantly, all of the points the function was tracking were lost within a few frames. Instead, I decided to track the players by the colors of their t-shirts. I used ImageCases to get a crop of each player and then ran DominantColors on it. After that, I removed the colors the two players had in common and ended up with these reference color schemes:

```mathematica
(* Number of people detected in each frame *)
pplnum = Table[
   Length[ImagePosition[images[[n]], Entity["Concept", "Person::93r37"],
     AcceptanceThreshold -> humandetect]],
   {n, frames}];

(* Dominant colors of the first and second detected person,
   collected over the hand-picked frames 1, 2, 3 and 5 *)
player1 = Union[Flatten[
    Table[DominantColors[
      ImageCases[images[[n]], Entity["Concept", "Person::93r37"],
        AcceptanceThreshold -> humandetect][[1]],
      MinColorDistance -> 0.3], {n, {1, 2, 3, 5}}]]];

player2 = Union[Flatten[
    Table[DominantColors[
      ImageCases[images[[n]], Entity["Concept", "Person::93r37"],
        AcceptanceThreshold -> humandetect][[2]],
      MinColorDistance -> 0.3], {n, {1, 2, 3, 5}}]]];

(* Colors that occur in both schemes (ColorDistance below 0.3) *)
common = DeleteDuplicates[Flatten[Table[
     If[ColorDistance[c1, c2] < 0.3, {c1, c2}, Nothing],
     {c1, player1}, {c2, player2}]]];

(* Keep only the colors that distinguish each player *)
sortedplayer1 = Select[player1, ! MemberQ[common, #] &];
sortedplayer2 = Select[player2, ! MemberQ[common, #] &];
```

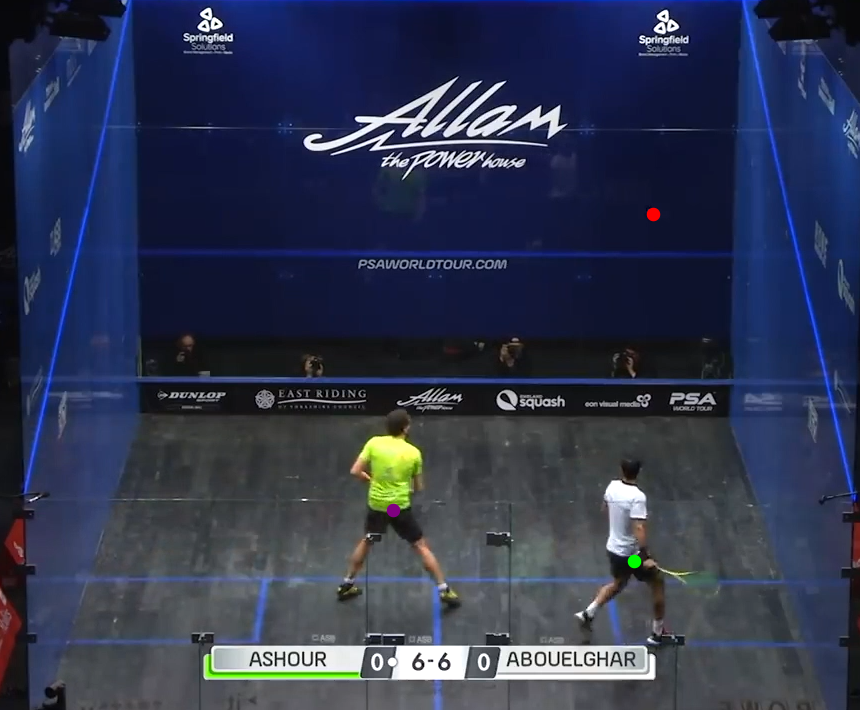

Then, for each frame, I used ColorDistance to determine which person crop returned by ImageCases matched which reference color scheme. Putting the tracking of the ball and the players together, we get something like this:

The players can be reliably tracked in every frame and the ball is accurately tracked in almost 70% of all frames.
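The per-frame color matching described above is not shown in code. One plausible way to do it, sketched here in Wolfram Language under stated assumptions, is to score each crop against a reference scheme by the average distance from each of its dominant colors to the closest scheme color, and to compare both possible assignments of the two crops. The names `schemeDistance` and `assignPlayers` and this scoring rule are hypothetical, not the original method.

```mathematica
(* Hypothetical match score: for each dominant color of a crop, the distance to
   the closest color of a reference scheme, averaged over the crop's colors.
   Lower means a better match. *)
schemeDistance[cropColors_List, scheme_List] :=
 Mean[Table[Min[Table[ColorDistance[c, s], {s, scheme}]], {c, cropColors}]]

(* Given the dominant colors of the two person crops in a frame, return the
   player labels {label of crop A, label of crop B}: {1, 2} if crop A is
   player 1, otherwise {2, 1}. Both assignments are scored and the cheaper wins.
   The pattern only matches when exactly two crops are passed. *)
assignPlayers[{colorsA_List, colorsB_List}, scheme1_List, scheme2_List] :=
 If[schemeDistance[colorsA, scheme1] + schemeDistance[colorsB, scheme2] <=
   schemeDistance[colorsA, scheme2] + schemeDistance[colorsB, scheme1],
  {1, 2}, {2, 1}]

(* Example for frame n, assuming exactly two people are detected in it:
   crops = ImageCases[images[[n]], Entity["Concept", "Person::93r37"],
     AcceptanceThreshold -> humandetect];
   assignPlayers[DominantColors[#, MinColorDistance -> 0.3] & /@ crops,
     sortedplayer1, sortedplayer2] *)
```

Scoring the two assignments jointly guarantees that the two crops never receive the same label, which could happen if each crop were simply matched to its nearest scheme on its own.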

Shortcomings

Although the image processing algorithm works fairly well for all courts with its default parameters, the areas of the image where the ball is likely to be have to be redefined for footage from different tournaments, because camera angles vary: if the camera is closer to the ground, for example, the players cover more of the front wall.

Future Directions

In the future, I would like to use this data to produce a log of shots for a game, with timestamps, and then use that data to analyze the strategies of different players.