Introduction

For the past few decades, the Communist Party of China's (CPC) censorship efforts have abridged its citizens' ability to exercise their human rights of opinion and expression for a variety of social, religious, and political reasons. These efforts are realized in numerous ways, from the use of military force to shut down peaceful protests in the Tiananmen Square Massacre to the detainment of political and religious prisoners. Recently, however, the internet, along with other technologies, has begun to foster the digital exchange of ideas, for example by increasing access to news and providing online forums for discussion of nearly any topic, perhaps paving the way to a freer China. Unfortunately, the liberalizing effects of the internet have been limited by online censorship. In this project, I seek to shed light on the intricacies of the "Great Chinese Firewall", which the CPC uses to filter and block objectionable websites, by creating tools to predict and test which websites are censored and by analyzing censored web domains to uncover which issues concern the Chinese government.

Domain Accessibility Functions

During the course of this project, I created several methods of testing the accessibility of a domain: the native Mathematica PingTime and HTTPRequest functions, run while connected to the University of Tsukuba's China VPN, and third-party websites such as viewdns.info and ping.pe:

IsAccessiblePing[url_] := MatchQ[Head[PingTime[url]], Quantity] // Quiet

IsAccessibleHTTP[url_] :=
  Check[If[URLRead[HTTPRequest[url]]["StatusCode"] < 300, True, False],
    False] // Quiet

session = StartExternalSession["WebDriver-Chrome-Headless"]

IsAccessiblePingPe[url_, timewindow_: 7] := Block[{},
  ExternalEvaluate[session, "OpenWebPage" -> "http://ping.pe/" <> url];
  Pause[timewindow];
  If[Mean[ToExpression[
      StringReplace[
        ExternalEvaluate[session,
          "ElementText" ->
            First@ExternalEvaluate[session,
              "LocateElements" -> <|"Id" -> "ping-CN_" <> # <> "-loss"|>]] & /@
          ToString /@ {2, 3, 4, 5, 6, 11, 12, 13, 200}, "%" -> ""]]] < 50,
    True, False] // Quiet
]

IsAccessibleViewDNS[url_] :=
  StringContainsQ[
    Import["https://viewdns.info/chinesefirewall/?domain=" <> url],
    "site should be accessible from within mainland China"] // Quiet

After some testing, I found that the IsAccessiblePing and IsAccessiblePingPe methods were inaccurate because of how China implements its filter. Ping packets were often lost even when not connected to the Chinese Firewall, rendering the functions useless. Notably, those two functions reported the domain www.wolfram.com as inaccessible, though it is clearly accessible. While the HTTPRequest approach (IsAccessibleHTTP) was functional and accurate when connected to the VPN, the slow speeds of the public VPN made it infeasible to employ across larger datasets. In the future, I would like to set up a private Chinese server specifically for HTTPRequest for greater speeds; in the meantime, I relied on the third-party service at viewdns.info.

Testing Google Domain Sites Given Search Query

gg = ServiceConnect["GoogleCustomSearch", "New"]

GoogleQueryDomains[query_, n_: 5] :=
  DeleteDuplicates[
    URLParse[#]["Domain"] & /@
      Normal[gg["Search", {"Query" -> ToString[query], MaxItems -> n}][[All, "Link"]]]]

PercentCensored[query_String, m___Integer, function_Symbol, n___Integer] :=
  Block[{urlList = GoogleQueryDomains[query, m]},
    N[Length[UnaccessibleWebsites[urlList, function]]/Length[urlList]*100]]
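PercentCensored calls a helper, UnaccessibleWebsites, whose definition does not appear in this post. A minimal sketch of what such a helper might look like is given here, assuming it simply keeps the domains for which the supplied test does not return True. The definition and the stub checker stubAccessibleQ are assumptions for illustration, not the original code.

```wolfram
(* Hypothetical helper, not from the original post: keep the domains for
   which the supplied accessibility test does not return True. *)
UnaccessibleWebsites[urlList_List, function_] :=
  Select[urlList, ! TrueQ[function[#]] &]

(* Stub checker for offline illustration only; a real run would pass
   IsAccessibleViewDNS or IsAccessibleHTTP instead. *)
stubAccessibleQ[url_String] := ! StringContainsQ[url, "blocked"]

UnaccessibleWebsites[
  {"example.com", "blocked-example.org", "example.net"}, stubAccessibleQ]
(* {"blocked-example.org"} *)
```

Using TrueQ means that any result other than an explicit True, including a failed or unevaluated check, counts the domain as inaccessible. That is a design choice of this sketch and would inflate the censored percentage whenever the test itself fails.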

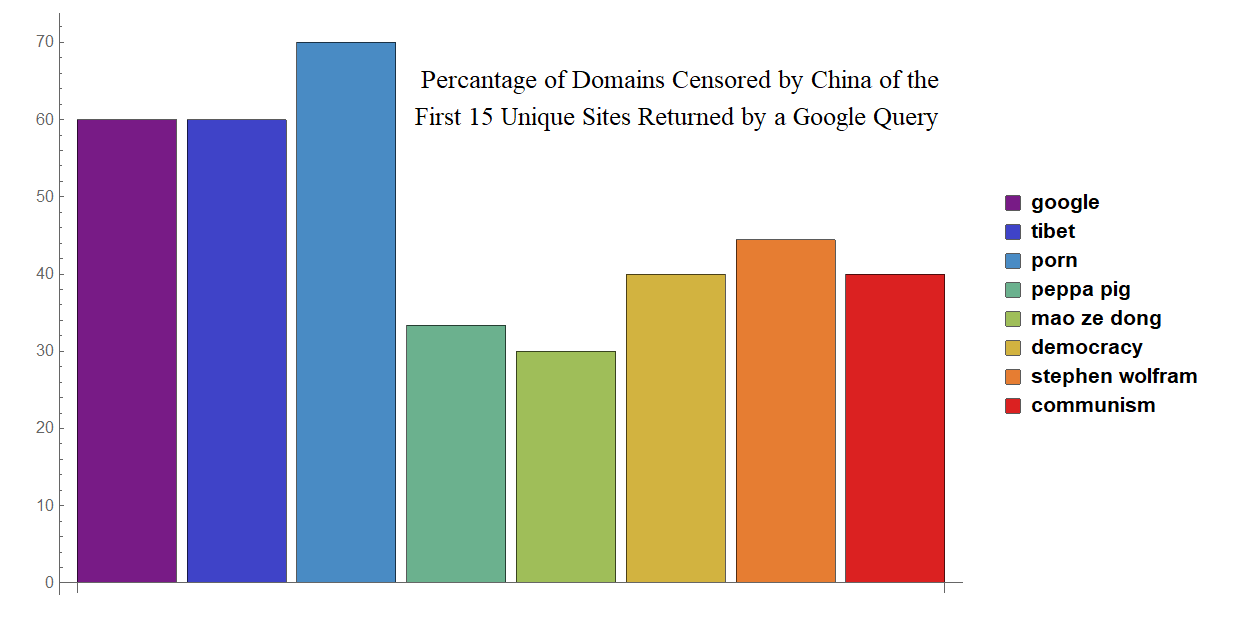

The above functions help reveal the severity of censorship in different topics. The GoogleQueryDomains takes a query and returns a list of unique domain names taken from Google's Custom Search Engine API. The PercentBlocked inputs those domains and returns the percentage of the websites that are blocked. The results generated from these functions generated the following bar plot:

Predicting Accessibility Through Machine Learning

ChinaDataset =
  Import["C:\\Users\\User1\\Desktop\\ookami\\ChinaDataset.xlsx"][[1]];

TrainingData =
  Table[Rule[ChinaDataset[[n]][[4]], ChinaDataset[[n]][[3]]],
    {n, 2, Length[ChinaDataset]}];

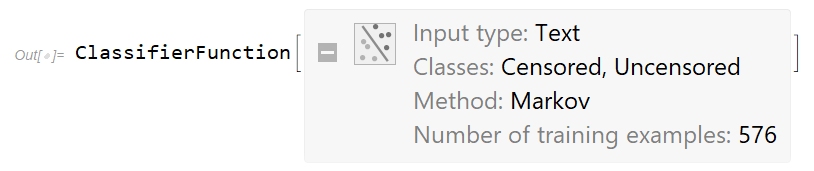

MarkovClassifier = Classify[TrainingData]

PredictCensored[query_] := Block[{plaintext},
  plaintext = WikipediaData[query];
  (* MissingQ always yields True or False, unlike == applied to a string *)
  If[MissingQ[plaintext],
    Print["Wikipedia Article Not found"];
    Return[]];
  MarkovClassifier[plaintext]]

I compiled the training set for the Markov Classifier by scraping the Alexa domain list and accessibility status from the GreatFire Project, together with each domain's corresponding Wikipedia page, imported with the WikipediaData function. The PredictCensored function takes a website, attempts to find its Wikipedia page, and, if it finds one, puts that text through the Markov Classifier and returns "Censored" or "Uncensored". Because of this implementation, the Classifier is limited in that it can only accept websites that have Wikipedia pages.
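One way to gauge how far such a classifier can be trusted is to hold out part of the labeled data and measure accuracy on it. The sketch below is not part of the original project: SplitTrainTest is a hypothetical helper, toyData is made-up stand-in data in the same text -> label shape as TrainingData, and Method -> "Markov" is set explicitly as an assumption (the original call lets Classify choose the method).

```wolfram
(* Hypothetical helper: shuffle labeled examples and split them into
   a training part and a held-out test part. *)
SplitTrainTest[data_List, fraction_: 0.8] :=
  TakeDrop[RandomSample[data], Floor[fraction*Length[data]]]

(* Toy stand-in for TrainingData (text -> label); the values are made up. *)
toyData = Join[
   Table["news politics protest report " <> ToString[i] -> "Censored", {i, 20}],
   Table["shopping retail store prices " <> ToString[i] -> "Uncensored", {i, 20}]];

{train, test} = SplitTrainTest[toyData];

(* Train on the training part only, then score on the held-out part. *)
toyClassifier = Classify[train, Method -> "Markov"];
ClassifierMeasurements[toyClassifier, test, "Accuracy"]
```

With the real data, TrainingData would take the place of toyData. The accuracy figure only describes domains that have Wikipedia pages, since those are the only inputs the classifier sees.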

Domain Text Analysis

CensoredSites = Flatten[Part[ChinaDataset[[All, 4]], 2 ;; 143]];

cc = 0;

Monitor[
  wordsCen =
    DeleteStopwords[cc++;
      Once[Select[TextWords[#], StringMatchQ[#, LetterCharacter ..] &]]] & /@ CensoredSites;,
  cc];

reg = DiscretizeGraphics[Entity["Country", "China"]["Polygon"]];

wordplot = WordCloud[Flatten[wordsCen], reg, MaxItems -> 150]

The above code outputs the word cloud shaped like China that begins this post. It is essentially an agglomeration of common words that recur across the Wikipedia pages of censored domains. Through this visualization, I saw a clear connection between current controversial issues in China and the types of content censored. For instance, the recurrence of the words "Falun Gong", the name of a suppressed religious group, within the censored domains reflects the debate, or lack thereof, regarding religious freedom in China. The prominence of Google in this diagram reflects not only the scale of censorship directed at the company, but also its prevalence on the internet, further emphasizing the extent to which this firewall alters the internet ecosystem for Chinese citizens.

Closing Remarks

In conclusion, this project was much more difficult than I initially imagined, but still extremely insightful for me, having dealt with the Chinese Firewall firsthand. My preconception was that if I could tunnel past the firewall when I visit China, then it must be relatively simple to tunnel back in. The difficulty of accurately testing the accessibility of websites in China, as illustrated by the numerous functions and approaches I tried, forced me to take this project in different directions, such as the Classifier.

Lastly, I would like to thank my counselors Christian and Chip for their patience and help. Without Christian, this whole project probably wouldn't have happened, and without Chip, the pretty picture of China wouldn't have had that black border.
