Actually this seems to work for me, in spite of the red syntax coloring!

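    rdata = ResourceData["State of the Union Addresses"]
    (* keep the two input columns and the "Age" target column *)
    data = rdata[All, {"Date", "Text", "Age"}]
    (* training-set size: 90% of the rows *)
    sample = IntegerPart[0.9*Length[data]]
    trainingDataset = RandomSample[data, sample];
    (* the remaining rows form the test set *)
    testDataset = Complement[data, trainingDataset];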

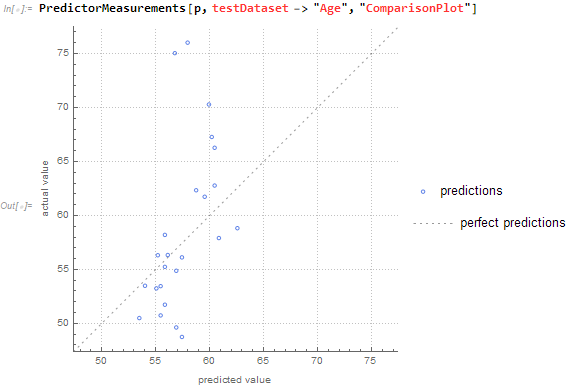

    (* train a predictor for the "Age" column from the other columns *)
    p = Predict[trainingDataset -> "Age"]
    PredictorMeasurements[p, testDataset -> "Age", "ComparisonPlot"]

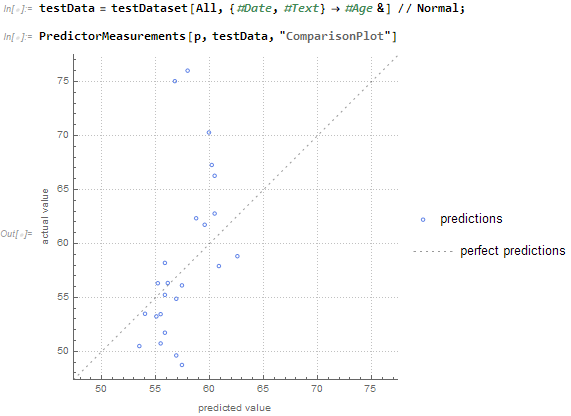
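Besides `"ComparisonPlot"`, `PredictorMeasurements` can return scalar properties such as `"StandardDeviation"` and `"RSquared"`, and a short list of numbers is easier to compare between two runs than two plots. The sketch below is only an illustration on hypothetical toy data (`toyData`, `toyTraining`, `toyTest` and `toyPredictor` are made-up names, not the State of the Union resource), and the numbers it prints depend on the random seed.

```mathematica
(* Hypothetical toy regression data: {x, noise feature} -> noisy linear target *)
SeedRandom[7];
toyData = Table[{x, RandomReal[]} -> 3 x + RandomReal[{-1, 1}], {x, 1, 100}];

(* 90/10 split: shuffle once, first 90 rows for training, last 10 for testing *)
{toyTraining, toyTest} = TakeDrop[RandomSample[toyData], 90];

toyPredictor = Predict[toyTraining];

(* scalar measures instead of a plot: returns a list of two numbers *)
PredictorMeasurements[toyPredictor, toyTest, {"StandardDeviation", "RSquared"}]
```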

Alternatively, transform the Dataset into a list of input -> output rules first:

    (* turn each row into a {Date, Text} -> Age rule and extract the plain list *)
    tData = data[All, {#Date, #Text} -> #Age &] // Normal;
    trainingData = RandomSample[tData, sample];
    testData = Complement[tData, trainingData];
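`Complement` returns its result sorted into standard order and with duplicates removed, so identical rows would collapse into one, and a fresh `RandomSample` gives a different split on every evaluation. A minimal alternative split, sketched here on hypothetical toy data (`toyData`, `n`, `toyTraining` and `toyTest` are illustrative names, not part of the answer above), fixes the seed, shuffles once, and cuts the shuffled list with `TakeDrop`:

```mathematica
(* Hypothetical toy data standing in for tData: a list of input -> target rules *)
toyData = Table[{i, "text " <> ToString[i]} -> 2 i, {i, 20}];

(* training-set size, 90% of the rows, computed with exact arithmetic *)
n = Floor[(9/10) Length[toyData]];

(* fix the seed so the same split can be reproduced later *)
SeedRandom[1234];

(* shuffle once, take the first n rules for training, keep the rest for testing *)
{toyTraining, toyTest} = TakeDrop[RandomSample[toyData], n];

{Length[toyTraining], Length[toyTest]}
(* {18, 2} *)
```

Because every rule lands in exactly one of the two parts, no row is lost even if the data contains duplicates.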

    (* the rules already specify the output, so no "Age" spec is needed *)
    p = Predict[trainingData]
    PredictorMeasurements[p, testData, "ComparisonPlot"]

Using the same 90/10 split procedure and the same predictor setup as above, it gives essentially the same result: